In an Adversarial Research Engagement (ARE), human expertise iteratively directs the most thorough level of AI analysis. Ostium recently underwent an ARE to get directed security coverage across its entire risk surface, with all findings delivered alongside runnable PoCs.

Ostium Escalates to ARE: Deeper Security Coverage With Researcher-Directed AI

Introducing ARE: Adversarial Research Engagements.

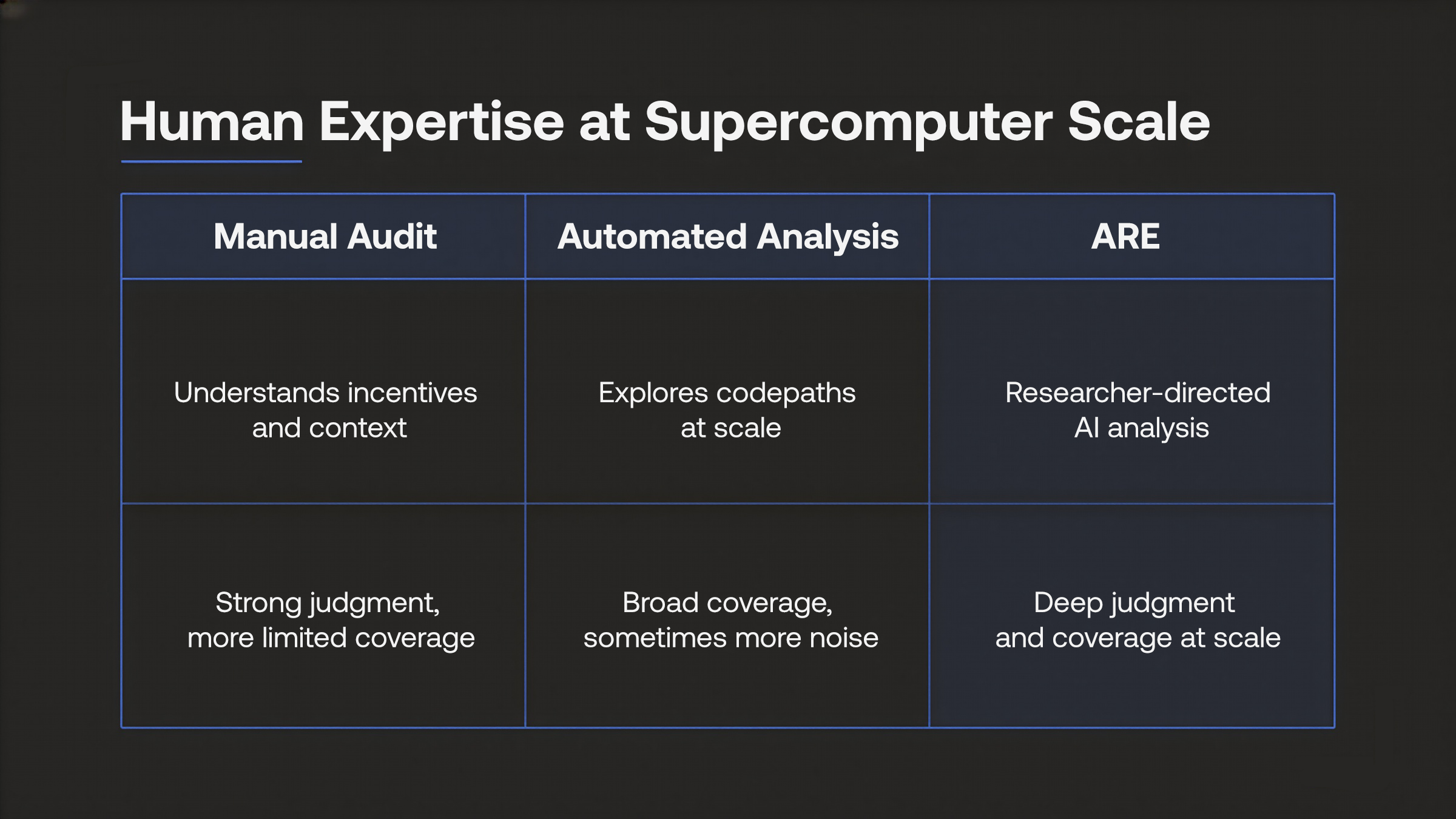

Adversarial Research Engagements collapse the manual vs. automated binary into a single model: researcher-directed iterative AI analysis.

Adversarial Research Engagements (ARE) leverage AI where it works best: as a force multiplier for human expertise, judgement, instinct, and real-world contextual understanding. This combination produces the most thorough security analysis available today.

Today’s AI reasons exceptionally well in structured settings, but it is less reliable in the messier, multi-actor, incentive-driven dynamics where emergent behavior matters, and where small misunderstandings produce confidently wrong answers. This is where human expertise still shines.

AI is the decisive factor when working with large codebases, exploring edge cases, generating and testing hypotheses at scale, and relentlessly pursuing the long tail of possible execution paths that human auditors simply do not have the time or attention to cover exhaustively.

Octane’s recent Monad audit contest win underscored the same point: AI analysis is especially effective when the codebase is large and the execution space is too broad for manual review alone.

Artificial Intelligence excels at this relentless breadth of coverage.

The human element of an ARE gives that coverage direction.

Ostium’s Experience with ARE

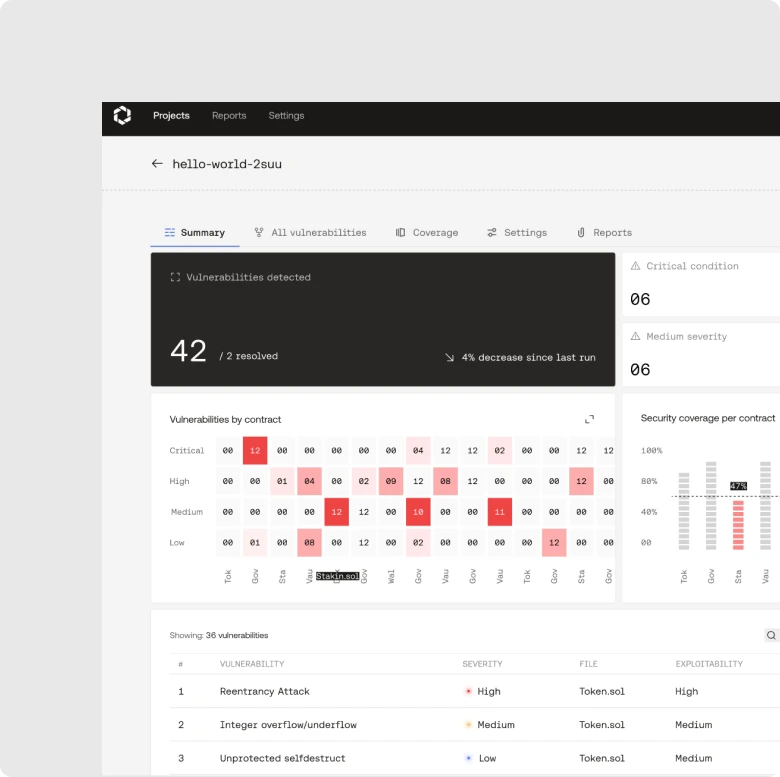

Following on from Ostium’s integration of Octane into its CI/CD pipeline, we collaborated with the Ostium team on an ARE, conducting a full-surface security analysis of the protocol deployed on Arbitrum One.

Ostium’s engagement covered the full protocol: vault settlement accounting, oracle integration paths, trade execution flows, async deposit/withdrawal mechanics, funding rate calculations, and upgrade lifecycle patterns across 11 upgradeable proxy contracts.

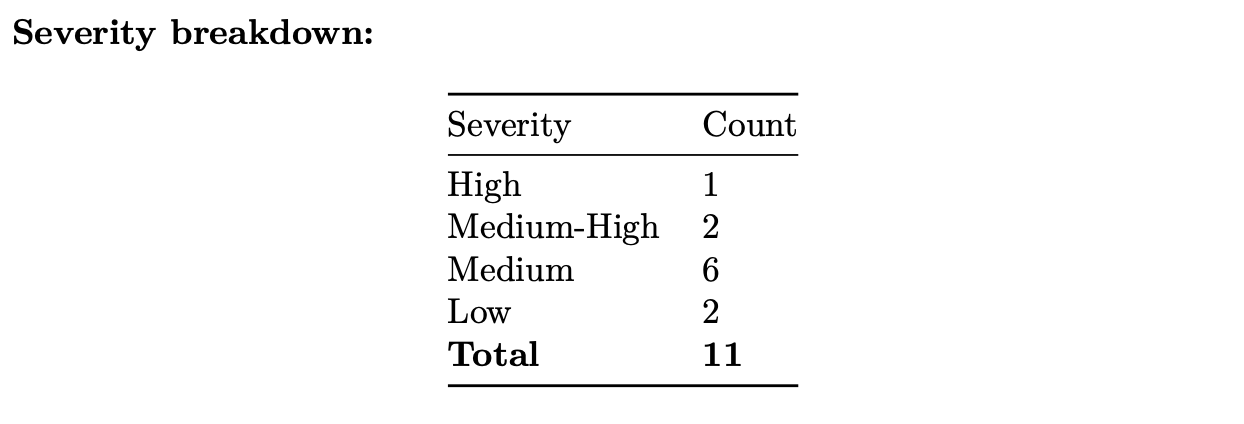

This ARE identified 11 confirmed vulnerabilities:

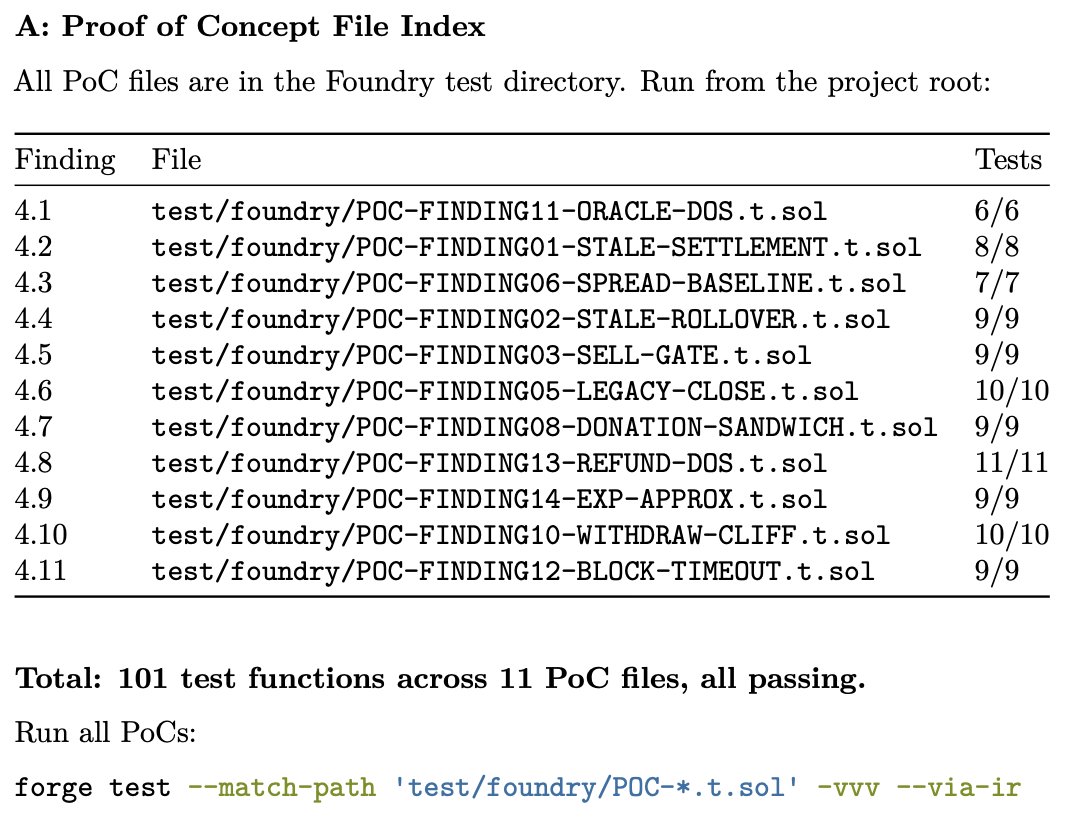

Each was validated with a dedicated proof-of-concept. Across 11 PoC files, 101 test functions provide dynamic proof for every finding in the report. No finding appears without runnable, passing tests. This stands in contrast to the industry norm, where findings are typically accompanied by theoretical descriptions rather than executable proof.

All Adversarial Research Engagements are bundled with PoCs that prove the validity of Octane’s analysis for critical, high, and medium severity findings.

The same analysis also produced a set of hardening recommendations: proactive changes that strengthened the threat model behind Ostium’s CI/CD analysis. These recommendations adjust the context the Octane agents operate within, informing better decisions on vulnerability detection and confirmation and boosting signal across future automated AI analyses.

“Octane’s AI-native ARE gave us a level of security coverage that would be difficult and expensive to replicate with manual review alone. Having a runnable PoC for every finding made the results easier to validate, faster to act on, and more useful for strengthening Ostium’s security posture going forward.”

– Marco Antonio Ribeiro, CTO and Co-Founder of Ostium Labs

Ostium is among the earliest teams to adopt the ARE model in production. Putting a complex live protocol through researcher-directed AI analysis reflects a serious commitment to advanced security practices, and that commitment produced concrete results.

By front-loading the deepest security analysis against protocol-specific attack vectors, ARE greatly reduces rework stemming from later review cycles. An early ARE makes downstream CI/CD analyses faster, more focused, and more useful.

How It Works

An Adversarial Research Engagement treats security analysis as a question of direction.

First, expert human researchers define the context of the system under review – its general architecture, threat model, and economic environment – and use that understanding to frame the initial attack surface. This initial stage is done in close collaboration with the client.

From there, AI takes over the work of exploration. It ingests and maps large codebases, traces through execution paths, and reasons about potential edge cases or invariants. Instead of sampling a subset of possibilities, as manual auditors must, the automated approach can systematically navigate large portions of the program’s execution space, generating candidate hypotheses about where vulnerabilities might exist.

These signals are then evaluated by the human researchers. Some are discarded as noise; others lead to deeper paths of inquiry. Researchers use their knowledge, experience and intuition to refine the context, narrow down the search, and direct the next round of exploration toward the most promising paths. This process of refinement and iterative review places a human expert directly in the loop.
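The cycle described above can be sketched as a simple loop. This is an illustrative toy only, not Octane's implementation: the names `Hypothesis`, `Context`, `run_engagement`, `toy_explore`, and `toy_triage` are all hypothetical, and the two callbacks stand in for the AI exploration step and the human review step.

```python
from dataclasses import dataclass, field
from typing import Callable

# All names here are hypothetical; this sketches the shape of the loop only.

@dataclass(frozen=True)
class Hypothesis:
    area: str          # part of the system the candidate concerns
    description: str   # what might be wrong there


@dataclass
class Context:
    focus_areas: list[str]                                  # where to explore next
    leads: list[Hypothesis] = field(default_factory=list)   # candidates kept by researchers


def run_engagement(
    context: Context,
    explore: Callable[[Context], list[Hypothesis]],
    triage: Callable[[list[Hypothesis]], tuple[list[Hypothesis], list[str]]],
    max_rounds: int = 3,
) -> list[Hypothesis]:
    """Alternate broad exploration with researcher triage until nothing new is directed."""
    for _ in range(max_rounds):
        candidates = explore(context)             # AI step: generate candidate hypotheses
        if not candidates:
            break
        kept, next_focus = triage(candidates)     # human step: discard noise, pick next paths
        context.leads.extend(kept)
        if not next_focus:
            break
        context.focus_areas = next_focus          # refined context drives the next round
    return context.leads


# Toy stand-ins so the sketch runs end to end.
def toy_explore(context: Context) -> list[Hypothesis]:
    return [
        Hypothesis(area, f"possible invariant violation in {area}")
        for area in context.focus_areas
    ]


def toy_triage(candidates: list[Hypothesis]) -> tuple[list[Hypothesis], list[str]]:
    kept = [h for h in candidates if h.area != "logging"]   # treat "logging" as noise
    # Direct one deeper pass into each kept top-level area, then stop.
    next_focus = [f"{h.area}/edge-cases" for h in kept if "/" not in h.area]
    return kept, next_focus


leads = run_engagement(Context(["vault", "oracle", "logging"]), toy_explore, toy_triage)
print([h.area for h in leads])
```

In this toy run, the first round discards the "logging" candidate and the second round explores only the areas the triage step pointed to, which is the narrowing behavior the iterative review is meant to produce.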

The efficiency gains from this approach mean researchers spend less time on repetitive pattern checking, which the AI handles, and more time exploring novel paths in the codebase, where human creative reasoning matters most. Both the AI and the human researcher focus on what they do best while each covers the other’s blind spots.

The critical distinction is iterative direction. A one-pass automated scan, however sophisticated the underlying model, lacks the human feedback loop that makes ARE so effective. Each cycle of human evaluation refines the AI's focus, producing compounding returns on coverage that no static pipeline can replicate.

Depth and breadth become multiplicative.

Escalate to ARE

Security analysis is evolving in the same direction as the systems it protects: toward the integrated application of human and machine intelligence.

Software has grown more complex, more composable, and more financialized. A single protocol may now span multiple smart contracts, blockchains, execution environments, and economic mechanisms that interact in unpredictable ways. The attack surface expands accordingly, and security analysis must evolve to match it.

By combining human strategic reasoning with raw machine intelligence, ARE delivers both the depth and the breadth of security analysis that modern systems require. As platforms and protocols grow more complex and economically integrated, ARE's directed intelligence model represents the new baseline for high-value systems.

When security matters most, escalate to ARE.

Schedule your demo today.