Gio Vignone, Founder & CEO of Octane Security, sits down with Lucas Manuel, Co-Founder & Head of Smart Contracts at Phoenix Labs (developer of Spark), to discuss how AI-native security tooling is reshaping their development workflows.

A Conversation With Lucas Manuel, Head of Smart Contracts at Phoenix Labs

Octane has found one or two of these where I've been extremely impressed, where I'm like, "This is equivalent to a world-class auditor."

Lucas Manuel, Co-Founder and Head of Smart Contracts at Phoenix Labs, developer of Spark and Maker

Gio: I'm Gio, the founder of Octane. I’ve been building the company for the past two, two and a half years. We work really closely with the Spark team for detecting security vulnerabilities autonomously. And this is Lucas from the Spark team. So I'll pass it over to you to give a quick intro.

Lucas: Yeah, thanks. My name is Lucas. I'm the Head of Smart Contracts at Spark. Previously I worked at MakerDAO as a smart contracts engineer and then Maple Finance as the tech lead, and then joined Spark in 2023.

Gio: Cool. So my first question for you is: can you give a high level of what Spark does and how does that create a unique risk surface for vulnerabilities?

Lucas: Yeah, great question. So in a nutshell, what Spark does is it manages assets at a large scale. We basically borrow at wholesale rates from Sky so that Sky can generate a large amount of revenue on a large amount of capital. And then we take those funds and allocate them into different RWA and DeFi strategies to generate a high risk-adjusted return. And we're able to keep the spread between what we're generating in revenue and what we pass back on to the Sky ecosystem.

Gio: Nice. That's awesome.

Lucas: So to answer the rest of the question — the unique risk surface is that we have a lot of integration points and we're dealing with very large amounts of funds. So you could consider us to be a sort of larger target within the DeFi system. A lot of things have to be considered with all of our development cycles.

Gio: What does that volume of funds look like on some time interval — maybe volume over a month or over a day — to give everyone context on how big it actually is?

Lucas: Yeah. Well, I have less of an idea of volume specifically and more like our current TVL. For the Spark Liquidity Layer, which is our automated asset allocation system, it peaked at 4.6 billion, and now it's 2 billion. And then SparkLend, which is our on-chain lending protocol, has a 2.1 billion TVL. But again, that one maxed out at like 6 billion TVL. So, you know, quite a substantial amount of funds in both of these protocols.

Gio: Yeah, that makes a ton of sense. So it sounds like the combination of a very high risk surface in terms of volume of funds, as well as having a lot of integration points to different protocols — that's a unique set of vectors that you guys particularly care about. Maybe looking at those integration points as well: is it mainly the integration points with Sky that you want to make sure you have security over, or are there any other types of really important integration points that you want to make sure you identify vulnerabilities within?

Lucas: Yeah. I mean, there's sort of a larger surface that we deal with than a lot of different protocols, meaning if you develop one specific DeFi protocol, the blast radius of what you're dealing with is very contained — you're the bottom layer. But when you sit above that and you are integrating with multiple different protocols, you have to consider a lot of different angles. And this is — we can get into this more — but I think this is where AI is really going to start to shine: developing extremely broad and deep context across many different smart contracts, rather than isolated to one specific codebase. We can get more into live deployments, source code that's pulled from Etherscan and put into the context of entire integrations that are in production. I think it's a super viable use case.

Gio: Yeah. Maybe getting into that a little — where do you see tools like Octane being most useful today in terms of understanding your security risk profile, and where do you see room for improvement as well for those tools?

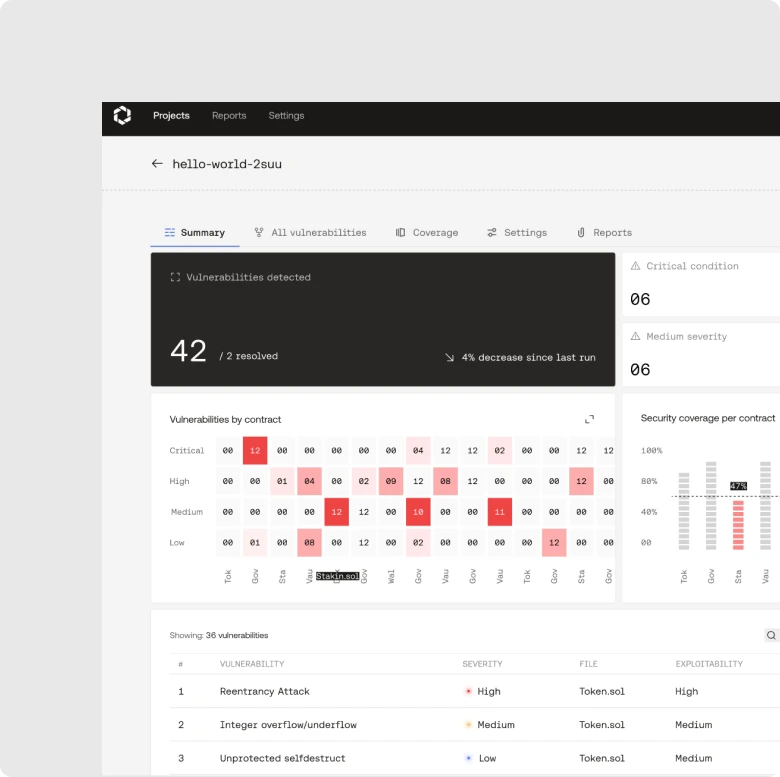

Lucas: Yeah. I mean, the clearest benefit that we've seen today is — not super surprising — but it's just surfacing issues early in the development cycle. Because Octane is running in our CI/CD pipeline for all of our pull requests, very early on in the development lifecycle we're having security-minded issues be brought up and discussed. And what's really interesting about that is usually in a lot of development cycles, you're in the design phase, initial implementation phase, tweaking phase, hardening phase, audit. The audit will surface security-focused issues and then you have that conversation then. But with Octane, those conversations are brought up way at the beginning. And then all of a sudden it's like, "Oh wait, maybe we should be designing our access control logic system differently," or, "We should be documenting our assumptions more clearly."

That's another interesting thing that I think has been made very clear — because we're working off of an AI model that performs better with training, we're actually pushed to create more comprehensive documentation about assumptions and all that kind of stuff much earlier in the development cycle, and then tweak it and iterate on it, because we know that that will give the best results. And an inherent result of that is that we're spending a lot more time having architectural discussions. The whole lifecycle is kind of just enriched by having Octane be part of the PR.

Gio: Yeah, that's super cool. Would you say that that has led to unique outcomes for your team in terms of security? Has that reduced budget spend in certain areas? Has it increased your overall confidence in the security of your entire protocol? How would you say that has impacted overall Spark as a business?

Lucas: Yeah, I mean I think absolutely it's improved our confidence. Another cool sort of anecdotal, vague, subjective thing is it's reduced the number of findings in our audits pretty significantly.

Gio: Sorry — I'm super curious. What does it look like in terms of count, or even just like a finger-in-the-air kind of feeling?

Lucas: Well, yeah. For example, we got a flawless audit back, which is pretty cool. We had just no findings, and I was like, "Wow, okay, cool" — because we'd already been through at that point like 20 iterations of Octane campaigns. So by the time it reaches that point, it's really hardened. And that combined with the fact that it's a smaller scope by the time you're shipping it to audit — you're basically like, "Yeah, this is solid." This is ready. It feels ready for production by the time you submit it for audit. That's what it should always feel like, but it feels that way even more, I guess.

Gio: That's awesome. That's fantastic. Are you able to say which auditing firm you ended up working with that had a completely clean report?

Lucas: I actually don't — I couldn't say offhand. It happened a little while ago, I forget.

Gio: Oh, that's cool. That's really great. Yeah. I think one of the biggest bull cases for Octane that we've seen with teams is they use it continuously and then they'll go to a competition. And although the volume of competitions is lower now, we definitely did see a couple teams that used Octane continuously, went to a competition, got back pretty much no findings. And after having hundreds of thousands of researchers look at it and getting the report back, you both get the really large volume of humans in the loop, and then you also get the AI that has been securing the system over time, which gives a pretty good sense of confidence to the team in terms of the overall protocol being secured. But yeah, that's super cool to hear that you guys got some back with no findings.

Lucas: Yeah, 100%.

Gio: Cool. How do you think AI security tooling like Octane compares to traditional manual audits that you've been used to? What are maybe some of the biggest differences that you see between the two workflows? And how do you see the security landscape as a whole changing?

Lucas: Yeah. So I would say there's almost like three tiers of audit findings. One is just generic output from Slither or something like that — static analyzers. Reentrancy guards, ERC-20 — things that the auditors don't even think about. They just run it through some tool and then populate a report with a lot of findings that were found very easily. Those are easy to address on your own if you know what tools to use, but Octane just makes it an absolute no-brainer. It's just baked in by default.

The second tier findings are more like the things that PR reviews didn't find because they're more security-researcher-focused — meaning they're interesting, and that's like, "Oh, that's why we hired an auditor" — because they have this perspective. And Octane has been quite solid at finding these. And I'll also say there's not many false positives. That's something that's very important. There's true positives that get acknowledged a decent amount, because — and we've talked about this, Gio — it's better for the system to be almost on the side of conservative, like, "We think this is something you should know about." And then that usually leads to conversations internally, which enrich our understanding of the protocol and give us a better sense of what to do next. But anyways, Octane will find very solid issues in this regard — they're security-focused.

And then where there is still a little bit of a gap, I'm noticing, is that truly exceptional auditors will spend that extra time. And this is the thing about Octane too: it clears the tier one and tier two issues away so that they've already been addressed. And then you get the best auditors to just try and use all their expertise to find some really novel, interesting, different kind of exploit. Those are the tier three issues. Octane has found one or two of these where I've been extremely impressed, where I'm like, "This is equivalent to a world-class auditor." But I wouldn't say we can do without human auditors yet, though I'm sure that's coming soon. There's still a need for human auditors, but the value that Octane adds is letting the real value of those human auditors come through.

Gio: Yeah. That's great. For those really unique novel issues — what do those attack vectors look like? What are some of those ones that Octane has found, and then what are the high-level concepts of the super niche stuff that Octane hasn't found, that a super specialist ended up finding way at the end?

Lucas: Yeah, good question. What I've found it comes down to is — and I know this is kind of on the roadmap — but it comes down to external scope knowledge. Meaning, if there's a Uniswap integration and an auditor is really well-versed in Uniswap logic, they can review the code here and understand that it will touch this thing and then make that connection manually. And then also do research and kind of do that stuff. Whereas I think Octane is — I think I still need to learn how to prompt it better on this stuff — but I think Octane is still mainly focused on the scope of the actual code, and just making assumptions sometimes about those third-party components.

Gio: Yeah. We've noticed that a little bit too. And we've seen that as the product has developed, and as teams have started building the right threat models, we've seen those assumptions go down and down. There's definitely a reduction of those. And as teams have brought in more context and as we've done more context engineering, it leads to better outcomes there too. But I think that's a really good point. Maybe we can look at bringing in — or flagging when there's a potential integration with Uniswap v2 that's not in scope even though it might not be clear it's not in scope. So yeah, I think that's a really good point of product feedback that we'll definitely implement. Like surfacing, "Hey, Uniswap v2 — you should consider importing this external library," which we already have pre-loaded in the back end. Something like that would be quite cool.

Lucas: Nice. Yeah.
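The flagging idea Gio describes, surfacing an integration that the code touches but that is not part of the review scope, can be illustrated with a rough sketch. This is not how Octane works; it is a minimal Python example that assumes scope is defined by import-path prefixes. The function name `out_of_scope_imports` and the sample contract paths are hypothetical.

```python
import re

# Matches Solidity import statements such as:
#   import "path/File.sol";
#   import {A, B} from "path/File.sol";
#   import * as X from "path/File.sol";
IMPORT_RE = re.compile(
    r'^\s*import\s+(?:[^"\';]*?\bfrom\s+)?["\']([^"\']+)["\']\s*;',
    re.MULTILINE,
)


def out_of_scope_imports(source: str, in_scope_prefixes: tuple[str, ...]) -> list[str]:
    """Return imported paths that do not start with any in-scope prefix."""
    paths = IMPORT_RE.findall(source)
    flagged = [p for p in paths if not p.startswith(in_scope_prefixes)]
    return sorted(set(flagged))


if __name__ == "__main__":
    # Hypothetical contract source; only the Uniswap import lies outside the scope.
    sample = '''
    pragma solidity ^0.8.21;
    import {IERC20} from "./interfaces/IERC20.sol";
    import "src/Controller.sol";
    import {IUniswapV2Router02} from "@uniswap/v2-periphery/contracts/interfaces/IUniswapV2Router02.sol";
    '''
    for path in out_of_scope_imports(sample, ("./", "src/")):
        print(f"Consider adding context for external dependency: {path}")
```

A prefix check like this only catches dependencies that appear as imports. Integrations reached through a bare address and a low-level call would need a different signal.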

Gio: Why do you choose to go with Octane over something like ChatGPT, Claude Code Security, Codex, or any of those foundation-model type of tools?

Lucas: Our posture at Phoenix Labs working on Spark is that we're using AI in basically every part of the development cycle, but never relying on it. So what that means is we'll use AI — Cursor, essentially — to just kind of autocomplete and speed up the development process. The human is still completely authoring the PR and reviewing their own code. It's never an agent writing smart contracts or smart contract tests, but autocomplete we're using.

And then we're using AI to generate documentation. That's a super useful use case for us — it's producing a bunch of documentation that we can review and iterate on to make sure it's comprehensive and accurate, but also do that really quickly. So that's super valuable.

We use CodeRabbit for PR reviews in GitHub. So that's for code correctness, test coverage — it's actually decently solid. It's nothing close to Octane, but it's a good first reviewer. And then once all of that's done, we get a human to review, and then we use Octane, and then we merge it.

So I guess my reasoning for using Octane over ChatGPT and Claude Code is that I do like the CI/CD integration, and I do like the confidence of all of the specific security-minded modeling. I've run repos through Opus 4.6 in Cursor to try and find bugs, and it's good, it's quite good, but it almost reviews it like it's JavaScript or something. Sometimes it doesn't actually understand which ways to think about something, you know what I mean?

Gio: Yeah, that makes sense. Have you tried Claude Code Security, their security product?

Lucas: No, I haven't. Should I try it?

Gio: Yeah. Let me know what you think. I'm curious to see how you think it compares. From what I've seen, comparatively, Octane finds much more niche threat vectors and things more holistically around the actual integration and deployment, whereas Claude Code Security finds more standard vulnerabilities that you would see across the board. It's more in the deployment context, but yeah, super curious to see what you find.

Lucas: Okay. Cool.

Gio: One other question I had — how do you handle the trust verification of the AI outputs? You said Octane is the last step in that workflow — after everybody's reviewed, code is done, then you run Octane for the final check before prod. How do you think about those outputs and how do you confirm that they're real?

Lucas: Well, I mean, to be completely honest, I don't trust any AI outputs yet as final. I think every AI output is a way to augment the development cycle for humans, to get reviewed by humans. At the end of the day, I think for close to every other type of engineering — software engineering — it's getting closer to being able to rely on AI for this stuff. But smart contracts — it's too critical. So humans are always in the loop as a baseline requirement. AI is never explicitly relied on for anything, but it is used as a tool to surface issues earlier and more often, and it's extremely helpful. And it basically makes the human-to-human final stages of review so much more efficient.

By the time someone is reviewing a PR — by the time I'm reviewing a PR at Spark — it's gone through internal self-review with Cursor, CodeRabbit, and basically it's like two other people have already reviewed the PR twice. But that's to get it to the clean state where I'm reviewing it with none of the typical things that come up during a peer review cycle. So it's just so much more efficient and so much more robust.

Gio: Nice. That's awesome. I know you kind of touched on this a little bit earlier, but maybe asking it more directly: where in the development cycle has Octane been the most useful for Phoenix Labs and Spark? Is it the early design? Is it in the pre-audit hardening phase? In the active PR reviews? Is it right before deployment, as verification after the audit fixes are in? Or is it a combination of all of them?

Lucas: I would say it is a combination, but the most valuable is PRs and pre-audit hardening. Basically what we do is for each PR that we write, Octane will review it and say, "Hey, this feature that you introduced introduces a new bug." And then we're like, "Oh, we hadn't considered that. Let's address it, merge it." And these kind of stack on top of each other. But then what we'll do is run it against the entire branch. And what we've been doing lately is running it against the entire branch without all of the previously acknowledged issues, so that it's a fresh pair of eyes before we submit it to audit, just in case. During the development cycle, there might be some acknowledged issue that was relevant earlier but not relevant later for some reason. We just get a completely fresh view, and then we'll address anything we need to and then ship to audit.
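The two modes Lucas describes, per-PR runs that respect previously acknowledged issues and a pre-audit run that ignores them, can be sketched roughly as below. This is an illustration only, not Octane's data model: the `Finding` type, the `fingerprint` field, and the `fresh_eyes` flag are all assumed names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    fingerprint: str  # hypothetical stable identifier for a finding
    title: str


def findings_to_triage(findings, acknowledged, fresh_eyes=False):
    """Return the findings a human should look at.

    Per-PR runs hide findings whose fingerprint the team has already
    acknowledged. The pre-audit run passes fresh_eyes=True so that
    nothing is hidden and stale acknowledgements get re-examined.
    """
    if fresh_eyes:
        return list(findings)
    return [f for f in findings if f.fingerprint not in acknowledged]


if __name__ == "__main__":
    found = [
        Finding("a1", "Rate limit can be bypassed via batched calls"),
        Finding("b2", "Missing zero-address check on admin setter"),
    ]
    acknowledged = {"a1"}
    print(len(findings_to_triage(found, acknowledged)))                   # PR run
    print(len(findings_to_triage(found, acknowledged, fresh_eyes=True)))  # pre-audit run
```

The design point is that the acknowledgement list is an input to the run rather than a permanent filter, so dropping it for one full-branch pass costs nothing.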

Gio: Nice. Okay, great. That's super helpful. So active PR reviews and hardening are the two top things for you. That's awesome.

Has Octane affected your relationship with manual auditors? Or maybe you perceive the value of receiving a manual audit differently? Or do you still see it as exactly as it was 12 to 24 months ago?

Lucas: I see it as better than it used to be, which is cool, because it's basically like we're getting more value out of them. You book an auditor for a week — before, it's like they've taken two days to get acclimated to the codebase, asking about assumptions, all that kind of stuff. On the third day, they're doing a lot of automated tooling kind of stuff. And then maybe the last 30 to 40%, it's like, okay, they've cleared out all the obvious things and now they're focusing on the really hard things.

Whereas with Octane, it's like all the documentation has been hardened and locked down, all of the obvious issues have already been eliminated. And something that we actually discussed is providing documentation of the issues that were acknowledged by our team, because something that's happened in the past is Octane will find an issue, we will acknowledge it, and then an auditor will come back with the exact same issue and we'll acknowledge it again. So what we're going to start doing now is saying, "Okay, not only have we eliminated all the obvious things, but we're also going to preemptively acknowledge all the things that we think you're going to find as well. Be aware of that." And then what used to be 30% now goes to almost 100% focused on the reason that we hire humans, which is the deep exploratory stuff.

Gio: Yeah, nice. We'll make sure to get you that exportable list of all the acknowledged issues.
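As a rough picture of what such an export could look like, the sketch below renders acknowledged issues as a markdown table that could be handed to an auditor at kickoff. The `AcknowledgedIssue` fields and the column headings are assumptions made for illustration, not an actual Octane export format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcknowledgedIssue:
    issue_id: str   # hypothetical identifier, e.g. "ACK-1"
    title: str
    rationale: str  # why the team accepted the issue instead of fixing it


def render_acknowledged(issues):
    """Render acknowledged issues as a markdown table, sorted by ID."""
    lines = [
        "| ID | Issue | Why it was acknowledged |",
        "| --- | --- | --- |",
    ]
    for issue in sorted(issues, key=lambda i: i.issue_id):
        cells = (issue.issue_id, issue.title, issue.rationale)
        # Escape pipes so free text cannot break the table layout.
        lines.append("| " + " | ".join(c.replace("|", "\\|") for c in cells) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_acknowledged([
        AcknowledgedIssue("ACK-2", "Admin can pause withdrawals", "Intended governance power"),
        AcknowledgedIssue("ACK-1", "Rounding favors the protocol", "Documented design choice"),
    ]))
```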

Lucas: Yeah. And I just want to say in this podcast — the Octane team has been extremely responsive and helpful. And you see it on this call right now: we're coming up with ideas, giving feedback, and they're just implementing it right away. It's awesome. I love it.

Gio: Yeah, thanks Lucas. That's definitely how we try to move. So glad that's received well. Awesome. Last question for you — and then also feel free to ask me any questions, or otherwise we can wrap up after this. One last thing I wanted to ask was: what would a best-in-class AI-native security workflow look like for an on-chain team like Spark and Phoenix? Picture the world 12 months from now. What does that look like and what makes it the best?

Lucas: I've got a lot of ideas. Okay, so, an AI-native security workflow. I would say, sort of continuing with our existing pipeline of AI-assisted development, AI-assisted PR reviews, Octane reviews, Octane final reviews, and then going to human audit, where I think things will change and get really interesting is actually providing entire live deployments. Basically providing a registry of addresses ("Here is Spark's deployed system on seven chains") along with all of the state that corresponds to it. I guess it could just be dynamically loaded.

And then all of the live integrations, all of the funds, where all those are held in custody, the access control, everything related to the live system. And then it would first try and do some sort of AI-generated hyper-fuzzing of exploit scenarios, really trying to figure out every conceivable angle to extract funds from the live system. And then reviewing the code that we're planning on upgrading to: basically performing that actual procedural upgrade against some kind of mainnet fork, and then doing this insane fuzzing, exploit, and invariant campaign on the results, and basically just almost proving that you can't exploit the resulting protocol in some way. Can you ever really prove it? But we can get to a point now with enough compute and enough resources that it's extremely comprehensive. So I think that would be an amazing workflow for us.
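To make the "invariant campaign" idea concrete, here is a deliberately tiny Python sketch. It does not touch a chain or a fork; `ToyVault`, `BuggyVault`, and `run_campaign` are hypothetical stand-ins, and the invariant (balances always sum to total assets) is an assumed example. A real campaign of the kind Lucas describes would run against forked live state with far richer actions.

```python
import random


class ToyVault:
    """A tiny stand-in for a protocol: per-user balances plus a running total."""

    def __init__(self):
        self.balances = {}
        self.total_assets = 0

    def deposit(self, user, amount):
        self.balances[user] = self.balances.get(user, 0) + amount
        self.total_assets += amount

    def withdraw(self, user, amount):
        balance = self.balances.get(user, 0)
        if amount > balance:
            raise ValueError("insufficient balance")  # models a revert
        self.balances[user] = balance - amount
        self.total_assets -= amount


class BuggyVault(ToyVault):
    """Simulates a bad upgrade: withdraw forgets to update total_assets."""

    def withdraw(self, user, amount):
        balance = self.balances.get(user, 0)
        if amount > balance:
            raise ValueError("insufficient balance")
        self.balances[user] = balance - amount


def invariant_holds(vault):
    """Accounting invariant: balances are non-negative and sum to the total."""
    values = vault.balances.values()
    return sum(values) == vault.total_assets and all(b >= 0 for b in values)


def run_campaign(make_vault, runs=200, depth=50, seed=0):
    """Return the first action sequence that breaks the invariant, or None."""
    rng = random.Random(seed)
    users = ["alice", "bob", "carol"]
    for _ in range(runs):
        vault = make_vault()
        history = []
        for _ in range(depth):
            action = rng.choice(["deposit", "withdraw"])
            user = rng.choice(users)
            amount = rng.randint(1, 1000)
            history.append((action, user, amount))
            try:
                getattr(vault, action)(user, amount)
            except ValueError:
                continue  # a revert is acceptable; a broken invariant is not
            if not invariant_holds(vault):
                return history
    return None


if __name__ == "__main__":
    print("correct vault counterexample:", run_campaign(ToyVault))
    broken = run_campaign(BuggyVault)
    print("buggy vault broke after", len(broken), "actions")
```

As Lucas notes, a campaign like this never proves the absence of an exploit. It only shows that no counterexample was found within the sequences that were tried.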

Gio: That's awesome. That's super great. Yeah. Thanks, Lucas. I really appreciate it.

Lucas: Yeah. That's everything from my side.

Gio: Cool. Well, that's it from my side as well. This was great. And we have some new products to get to work on together. Awesome. Thanks again, Lucas.